AI models now shape which brands customers hear about, trust, and choose long before analytics can show you what’s happening. If you are not measuring how they talk about your brand, you are flying blind in the most important discovery channel emerging today.

AI visibility tools give you that clarity. They show when assistants recommend you, cite your content, or surface a competitor. Most work like prompt trackers: you provide the questions your audience actually asks, and the tool reports whether you appear in AI-generated answers and how often. It’s the AI-era equivalent of rank tracking, and it provides a baseline you can actually improve.

Below, we break down the tools that help brands monitor, diagnose, and increase AI visibility, and where they meaningfully differ.

What’s Table Stakes for AI Visibility Tools

Most platforms in this space now share a common core. Before looking at differentiators, here’s what you should expect from any serious AI visibility tool:

- Runs real prompts across major LLMs (ChatGPT, Gemini, Claude, Perplexity, and others)

- Tracks whether your brand is mentioned, cited, or excluded

- Measures visibility as a percentage of tracked prompts

- Allows competitor comparisons on the same queries

- Shows prompt-level results (not just abstract scores)

- Reports visibility trends over time so you can see gains or losses

In other words, most platforms now answer the same baseline question:

“When people ask AI about this topic, do we show up?”
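
As a rough illustration of that baseline metric (visibility as a percentage of tracked prompts), here is a minimal sketch. It assumes you already have the answer text for each tracked prompt; the `TrackedAnswer` type, the `visibility_rate` function, and the sample data are hypothetical and do not reflect any specific tool's method.

```python
import re
from dataclasses import dataclass


@dataclass
class TrackedAnswer:
    prompt: str   # the question sent to the assistant
    engine: str   # label for the engine that produced the answer
    answer: str   # raw answer text returned


def mentions(brand: str, text: str) -> bool:
    """True if the brand name appears as a whole word, ignoring case."""
    pattern = r"\b" + re.escape(brand) + r"\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def visibility_rate(answers: list[TrackedAnswer], brand: str) -> float:
    """Percentage (0-100) of tracked answers that mention the brand."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if mentions(brand, a.answer))
    return 100.0 * hits / len(answers)


# Hypothetical sample data for a fictional brand called "Acme".
sample = [
    TrackedAnswer("best crm for startups", "engine-a", "Acme and Globex are popular."),
    TrackedAnswer("best crm for startups", "engine-b", "Consider Globex or Initech."),
    TrackedAnswer("crm with email sync", "engine-a", "Many teams pick acme for this."),
    TrackedAnswer("crm with email sync", "engine-b", "Initech offers email sync."),
]

print(visibility_rate(sample, "Acme"))  # 50.0
```

Plain name matching is the simplest possible check. It will miss misspellings and product names that differ from the brand name, which is one reason prompt-level results matter alongside the headline percentage.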

Where tools start to diverge is how they help you interpret that data, connect it to performance, and turn it into action. That’s what we focus on below.

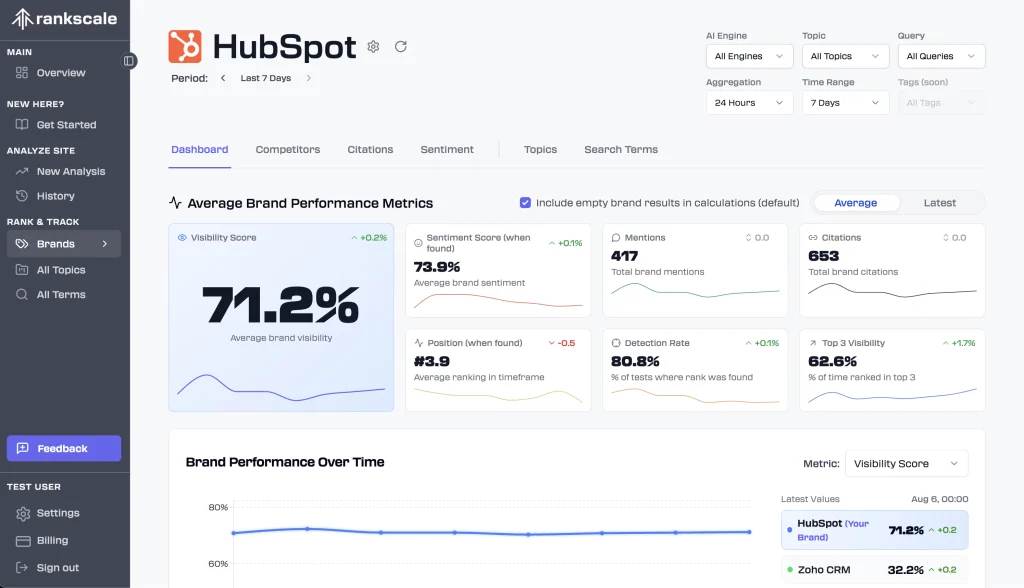

Rankscale

What it does: Diagnoses why your brand is or isn’t included in AI answers and where to focus next.

Rankscale’s key differentiator is its AI Readiness Score, which shifts the conversation from “are we visible?” to “are we referenceable?” Instead of stopping at visibility metrics, it audits your site for structural and technical factors that affect whether AI systems can confidently use your content in answers.

The audit evaluates content clarity, authority signals, technical setup, and overall answerability, then translates gaps into specific recommendations. Rankscale also suggests search terms you’re statistically more likely to win based on your existing topical footprint, helping teams prioritise effort rather than chase every possible prompt.

For teams managing large prompt sets, Rankscale’s ability to group queries by themes, funnel stages, or campaigns is where the platform becomes especially powerful. That aggregation layer makes it easier to see systemic weaknesses, not just isolated misses.

This makes Rankscale best suited to technically mature SEO teams that want diagnostic depth, not just reporting.

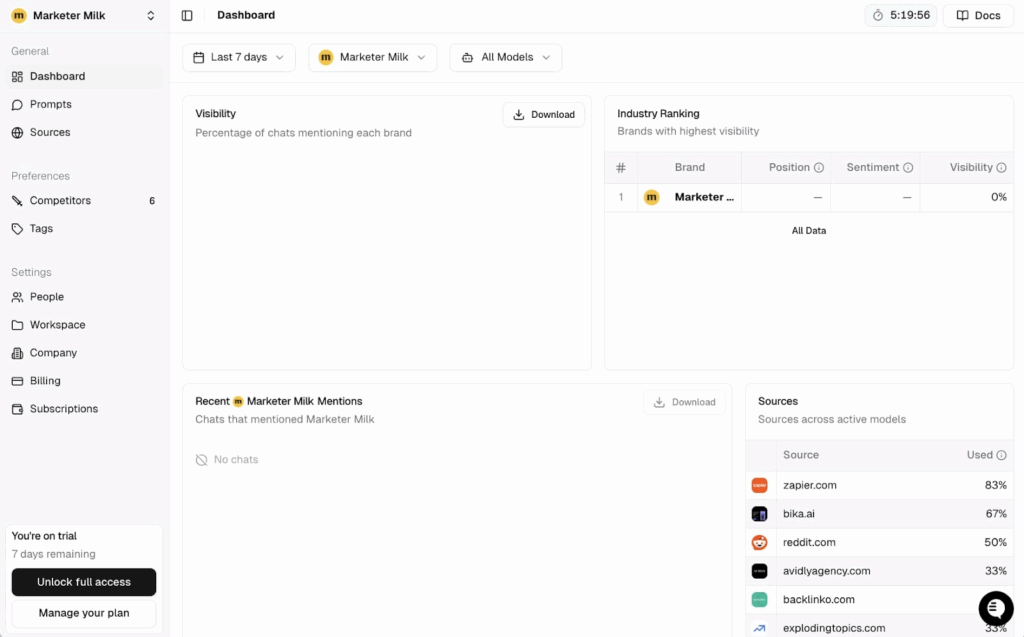

Peec.AI

What it does: Helps brands discover where they should appear in AI answers, not just measure where they already do.

Peec’s strongest differentiator is prompt discovery. Instead of relying entirely on user-defined queries, it actively suggests prompts based on your site’s existing keywords and topical coverage. This is particularly valuable if you’re still mapping how people interact with AI in your category.

The interface prioritises speed and usability. Visibility insights, competitor positioning, and sentiment are presented in clean, export-friendly views, making Peec easy to operationalise across marketing teams without deep SEO expertise.

Peec is most effective for established brands that already have demand and want to expand or defend their presence in AI recommendations, rather than diagnose deep technical issues.

AirOps

What it does: Turns AI visibility gaps into prioritised content and SEO actions.

AirOps intentionally treats visibility tracking as an input, not the end product. Its differentiation is how it operationalises gaps. When your brand is missing from key AI answers, AirOps helps translate that absence into concrete actions: which pages to update, what new content to create, or which external sources to target.

Rather than focusing on granular diagnostics, AirOps excels at answering “what should we do next?” Its workflows are designed to plug visibility insights directly into content and SEO execution, reducing the lag between insight and impact.

While the platform includes broader automation and content capabilities, its relevance here is as a bridge between AI visibility measurement and real-world output.

Profound

What it does: Provides a simplified, executive-level view of AI presence and topic coverage.

Profound differentiates itself through abstraction and stability. It is designed to answer high-level questions about how a brand appears across AI answer engines without requiring deep technical interpretation.

The Conversation Explorer is its most distinctive feature, surfacing the questions people ask AI in your category and highlighting where your brand lacks coverage at a topic level. This makes Profound especially useful for strategic planning and prioritisation rather than page-by-page optimisation.

Profound is best suited to teams that want a clear, repeatable snapshot of AI visibility to guide decision-making, rather than a deeply operational or diagnostic platform.

ScalePost

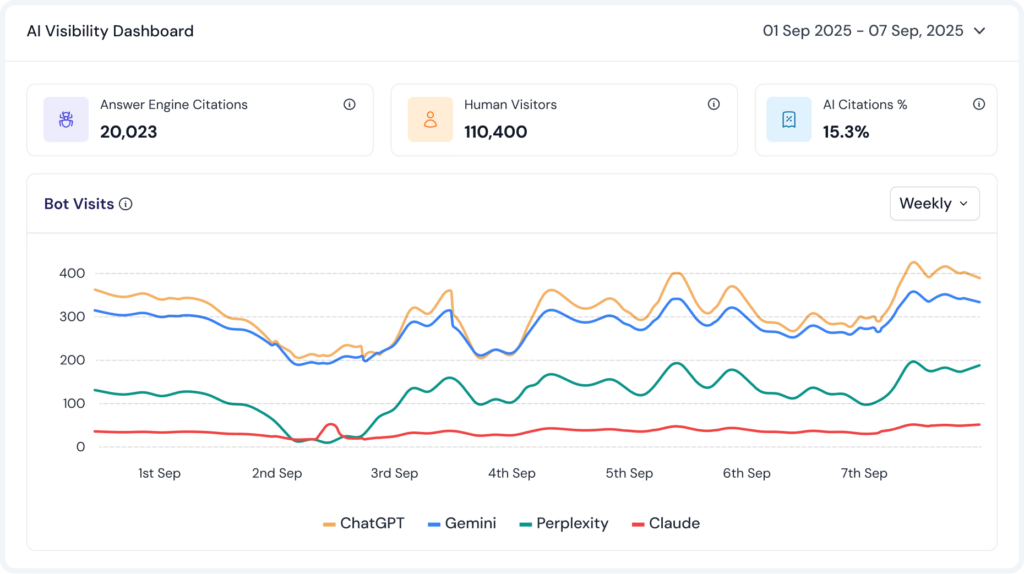

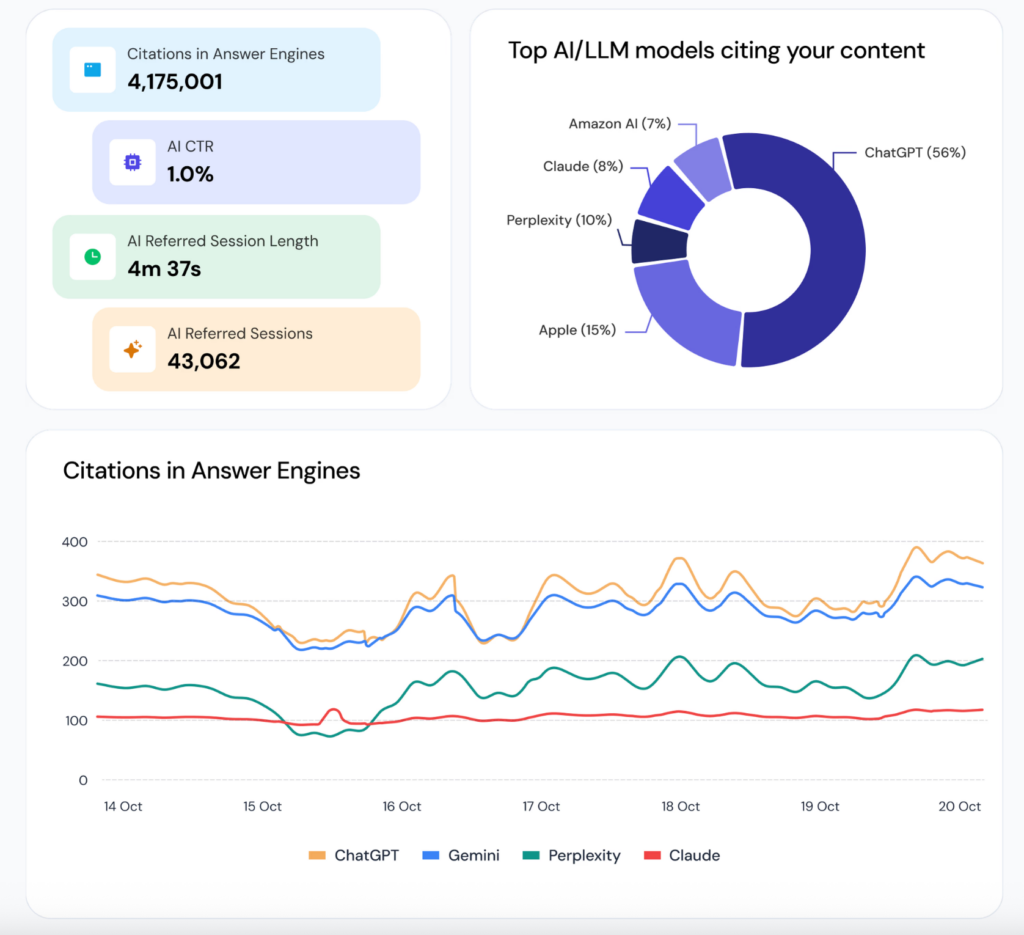

What it does: Measures AI content ingestion and AI-referred traffic using observed crawler and referral data.

ScalePost differentiates itself by measuring AI visibility at the infrastructure and referral layer rather than inferring presence from AI-generated answers. Through a lightweight CDN integration, it captures real traffic from known AI bots and crawlers, identifying which AI systems access a brand’s content and which pages are being ingested.

Beyond ingestion, ScalePost tracks downstream signals such as AI-driven citations, referral traffic, click-through rate, and on-site engagement, broken out by model. This makes it one of the few tools that connects AI exposure to measurable user behaviour, rather than stopping at visibility alone.

ScalePost does not measure ranking or impression share within AI answers. Instead, it is best suited to teams that want to understand whether AI systems are accessing their content and whether that exposure results in meaningful traffic and engagement, either as a complement to answer-level visibility tools or as part of a broader AI readiness strategy.

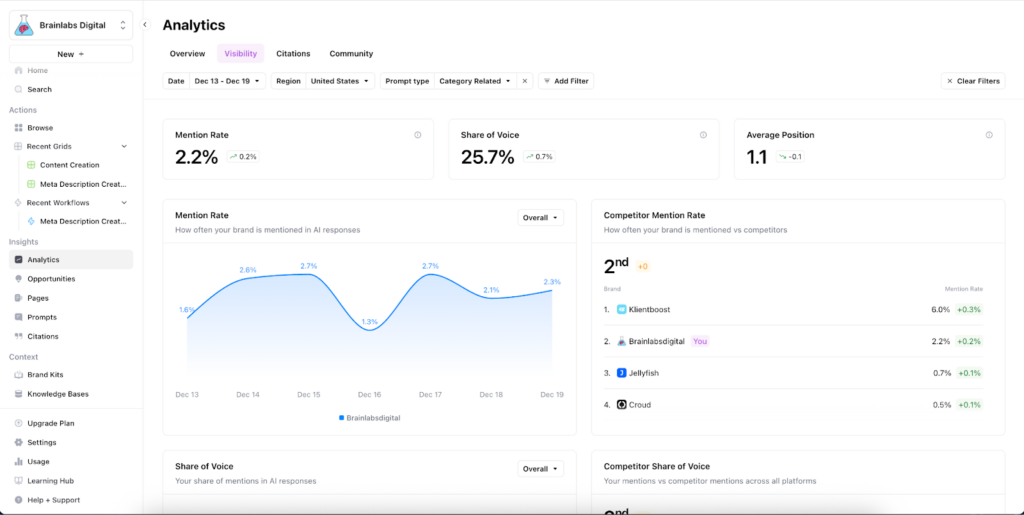

Brainlabs

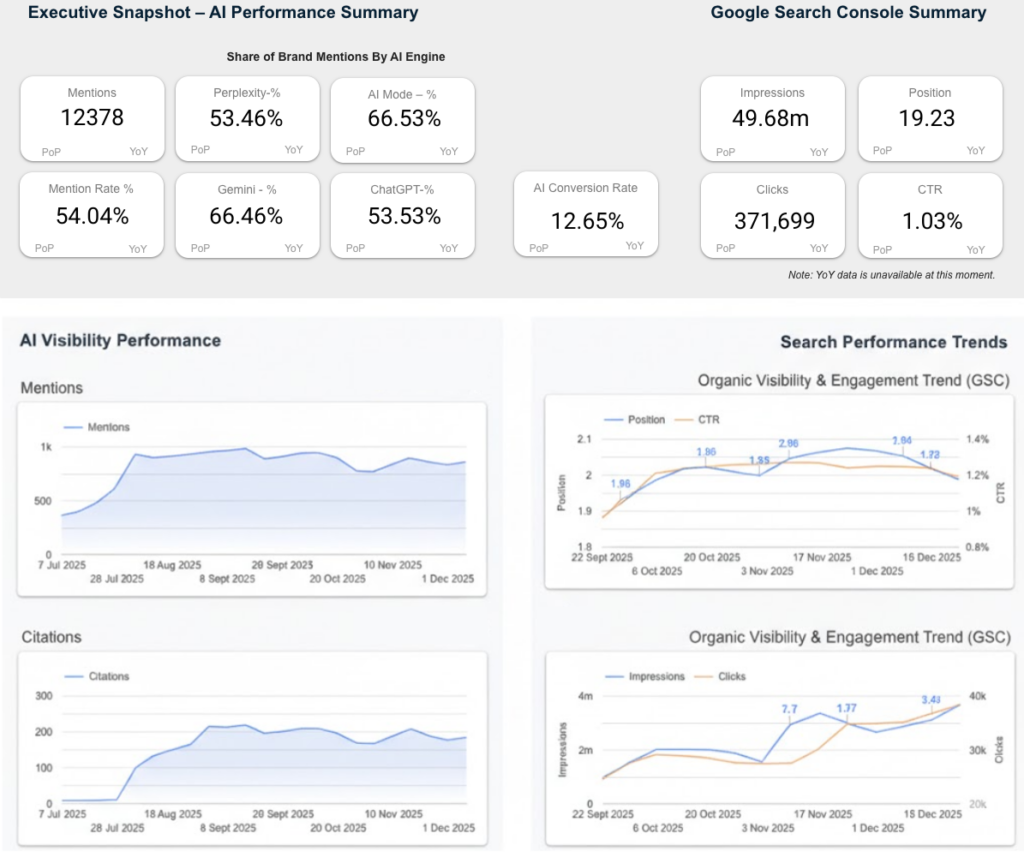

What it does: Connects AI brand visibility to search demand, engagement, and conversion performance in a single executive view.

The Brainlabs AI Visibility dashboard goes beyond surface-level visibility tracking by directly linking AI mentions and citations to downstream performance signals. It combines engine-level visibility across AI Mode, ChatGPT, Gemini, and Perplexity with Google Search Console and GA4 data, allowing teams to see how AI presence correlates with impressions, clicks, conversion rate, and app or lead starts over time.

In addition to high-level summaries, the dashboard breaks visibility down by prompt, topic, and AI engine, showing where a brand consistently appears, where citations lag behind mentions, and how coverage differs across models. Weekly trend views make it possible to track momentum, volatility, and alignment between AI visibility and organic demand.

Built for our clients, the dashboard is designed as an executive-facing measurement layer that helps teams move beyond “are we visible?” to “is AI visibility driving meaningful outcomes?”, either as a standalone starting point or as a complement to deeper specialist tools.
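
For readers who want to try this kind of comparison on their own exports, the sketch below computes a Pearson correlation between weekly AI visibility and weekly organic clicks. It is a generic statistical illustration, not the dashboard's method. The `pearson` helper is written out by hand to avoid version-specific library calls, and the weekly figures are invented. With only a handful of weeks, treat the result as a prompt for investigation rather than evidence of causation.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Hypothetical weekly data: visibility (% of prompts) and organic clicks.
weekly_visibility = [22.0, 25.0, 24.0, 30.0, 33.0, 31.0]
weekly_clicks = [1800.0, 1950.0, 1900.0, 2300.0, 2500.0, 2350.0]

r = pearson(weekly_visibility, weekly_clicks)
print(f"correlation between visibility and clicks: {r:.2f}")
```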

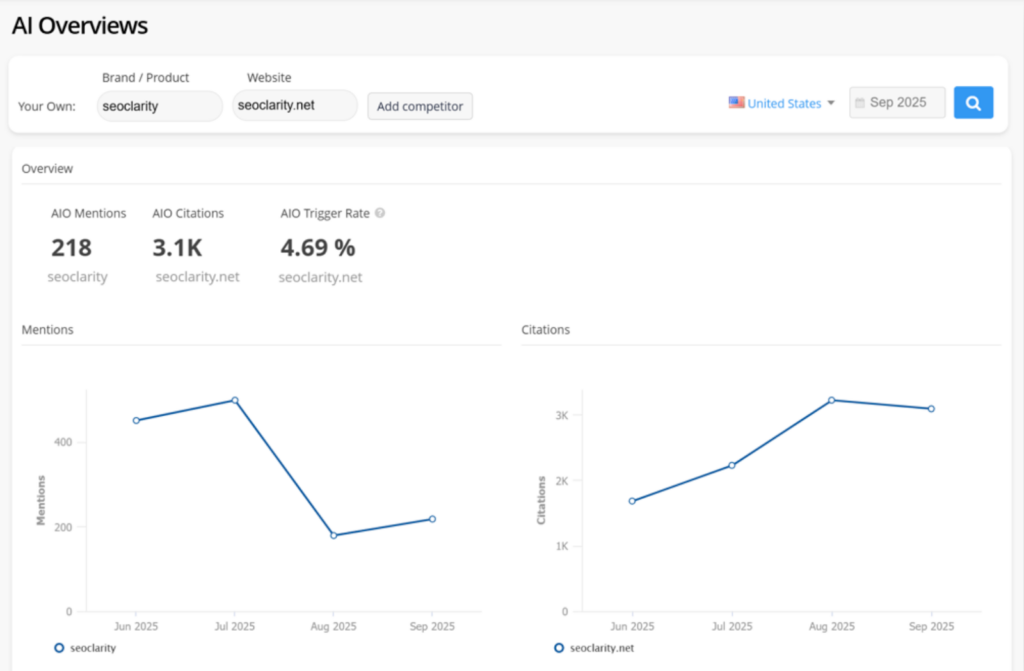

SEO Clarity ArcAI

What it does: Connects AI visibility directly to traffic, engagement, and business impact.

ArcAI’s differentiation lies in performance linkage. Visibility data is not isolated; it is tied to traffic share, engagement metrics, sentiment issues, and even hallucination detection. This allows teams to understand not just whether they appear in AI answers, but whether that visibility actually drives value.

Its AI Search Trends capability, built on analysis of over 100 million prompts, helps teams identify emerging topics early and assess visibility as demand shifts. Combined with prioritised optimisation recommendations, ArcAI is designed for scale and complexity.

This makes it a strong fit for enterprise teams that need AI visibility embedded within a broader measurement and performance framework.

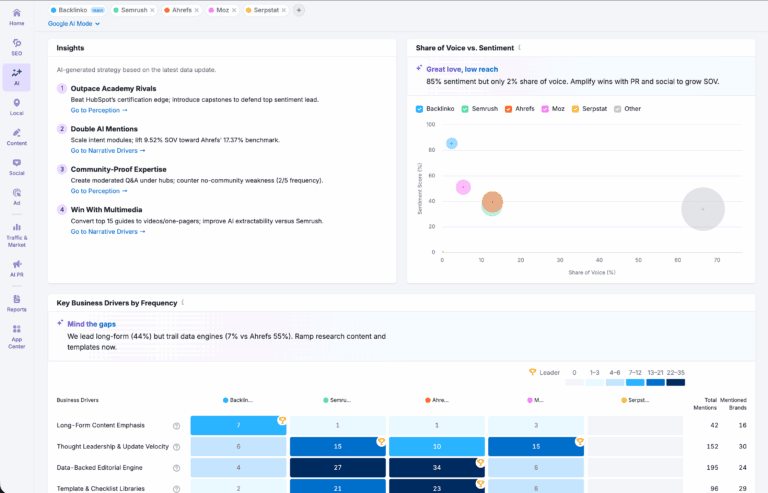

SEMrush

What it does: Explains AI visibility through the lens of traditional SEO signals.

SEMrush’s core differentiation is contextualisation. It frames AI visibility as a downstream effect of web authority, content quality, and brand signals rather than a standalone phenomenon.

By showing which domains and URLs AI systems reference when describing your brand, SEMrush helps teams understand how their broader SEO footprint shapes AI-generated narratives. This makes it particularly valuable for diagnosing misinformation, brand inconsistency, or overreliance on third-party sources.

SEMrush is best for organisations that want AI visibility integrated into an existing SEO-led operating model.

Radarkit

What it does: Shows how AI assistants describe your brand in real chat environments.

Radarkit differentiates itself by prioritising real chat interface behaviour over deeper analytics. It’s designed to give a fast, intuitive sense of how assistants talk about your brand in practice, rather than offering extensive diagnostics or workflows.

This makes it useful as a lightweight monitoring layer or an early-stage validation tool, especially for teams that want minimal setup and quick feedback.

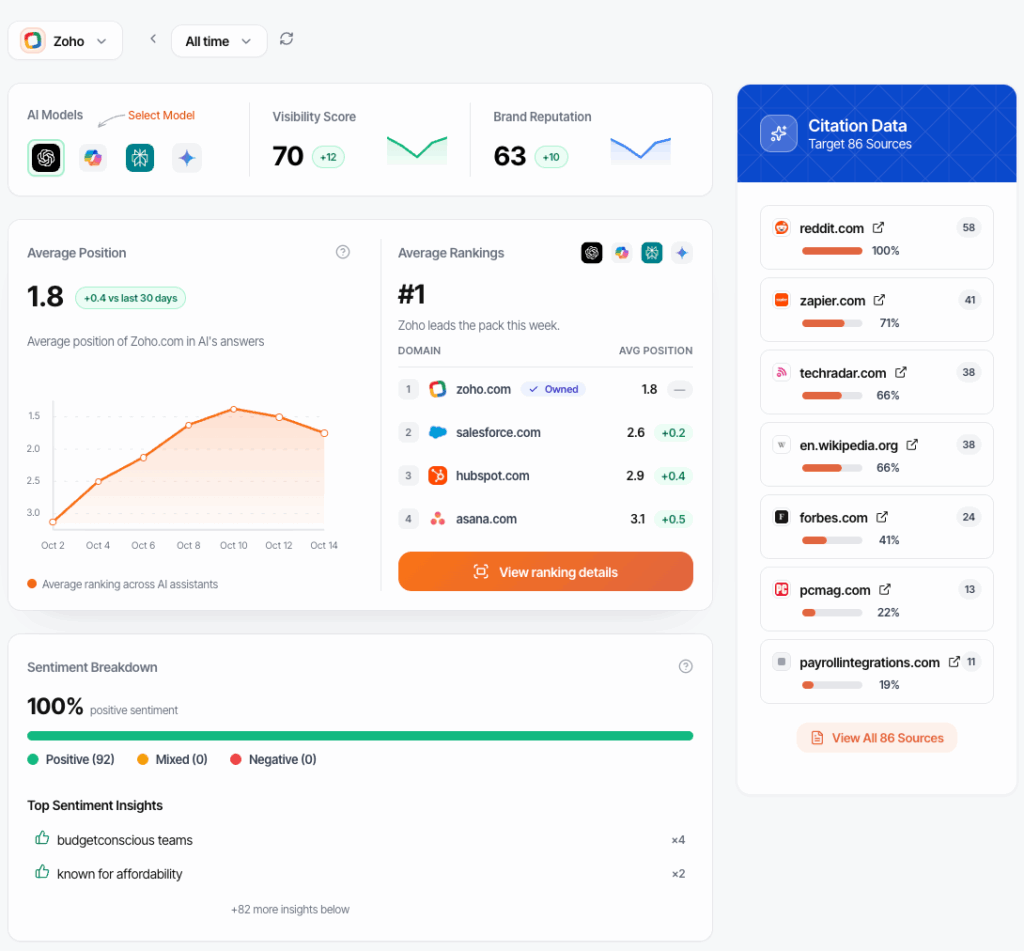

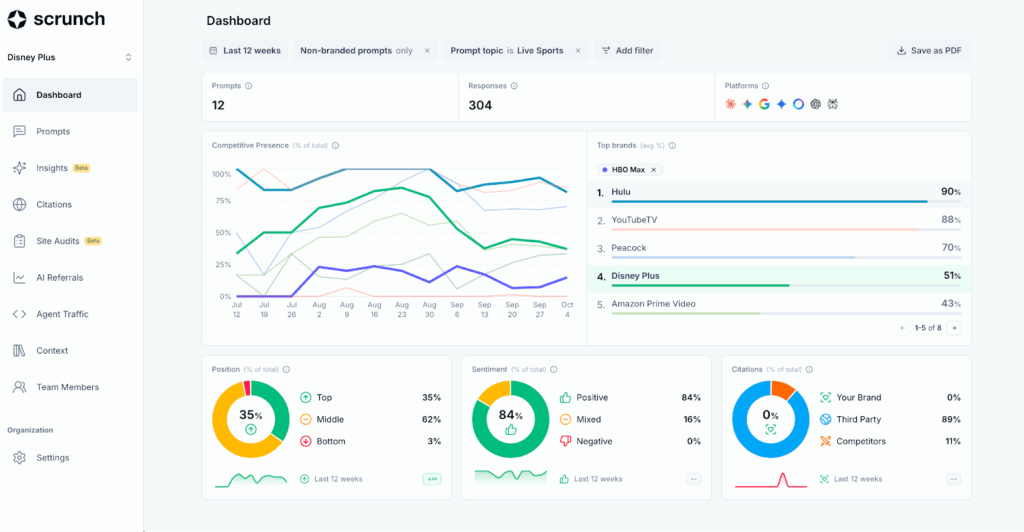

Scrunch AI

What it does: Improves how AI systems interpret and reference your brand’s content through technical implementation.

Scrunch pairs AI visibility tracking with site-level changes that make content easier for AI systems to understand and reuse. Its Agent Experience Platform adds AI-readable structure to your site, helping answer engines more clearly interpret and reference your content.

Combined with topic-level trend analysis and journey mapping, Scrunch helps teams see where AI-driven demand is emerging and whether their brand appears in those conversations. It’s best suited to teams that treat AI discovery as a core acquisition channel and have the technical resources to support implementation.

What to Actually Consider Before You Choose

The tool comparison only matters if you’re clear on what you’re measuring and why. Before you commit, focus on the factors that actually determine whether an AI visibility tool delivers value:

Engine Coverage: Track the engines where your buyers evaluate options, not every model available. ChatGPT and Perplexity matter for research-heavy queries, Google’s AI Overviews and AI Mode for SERP-adjacent discovery, and others only if your audience actually uses them.

Sampling Cadence: Weekly tracking is enough for most brands. Daily monitoring only makes sense for high-risk or fast-moving categories. More data doesn’t equal better insight; it just burns credits.

Citation Transparency: You need to see which sources AI systems cite when mentioning your brand. Without exportable citation data, optimisation is guesswork.

From Monitoring to Action: The fastest ROI comes when visibility insights feed directly into content and QA workflows. If your tool stops at dashboards, it’s adding friction, not removing it.
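
To put the cadence trade-off in numbers, here is a back-of-the-envelope sketch of how many prompt runs a tracking setup generates in a month. It assumes one run per prompt, per engine, per sampling pass, and a four-week month. The `monthly_runs` function and the example figures are hypothetical, and real pricing models vary by vendor.

```python
def monthly_runs(prompts: int, engines: int, runs_per_week: int, weeks: int = 4) -> int:
    """Total prompt runs in a period: prompts x engines x passes per week x weeks."""
    return prompts * engines * runs_per_week * weeks


# Example: 10 tracked prompts across 4 engines.
weekly_cadence = monthly_runs(prompts=10, engines=4, runs_per_week=1)
daily_cadence = monthly_runs(prompts=10, engines=4, runs_per_week=7)

print(weekly_cadence)  # 160
print(daily_cadence)   # 1120
```

Under these assumptions, daily sampling costs seven times as many runs as weekly sampling for the same prompt set, so it is worth reserving for the categories where answers actually shift day to day.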

Where to Start

No single tool wins across every use case. The real advantage comes from connecting visibility tracking to content operations.

Start by choosing one tool, adding three to five competitors, and tracking at least ten product- or service-led prompts for thirty days. Patterns will emerge quickly. Like early SEO, AI visibility is a long game: authority builds over time.

Once you know where you appear and where you don’t, the next step is optimisation. Our guide on How to Increase AI Visibility outlines the playbook for turning insight into measurable gains.